The database problem we solved with replica technique

Overview

As the database is growing bigger, there is a higher chance for latency and low efficiency to come into consideration. The heavy workload should not be handled by one main database anymore. Instead, a database replica is designed to cover and divide the workload into small pieces.

Note: This document will not go in-depth with the replica technology.

Transaction timeout

Transaction Timeout: Transaction timeout refers to a mechanism in a database system that automatically cancels or rolls back a transaction if it exceeds a specified time limit. It is a way to prevent long-running or stuck transactions from holding resources indefinitely and causing performance issues or deadlocks in the system. There are some reasons behind this problem:

- Long-running queries: If a transaction includes one or more queries that take significant time to execute, it can exceed the transaction timeout.

- Lock contention: If a transaction waits for a lock on a resource held by another transaction for an extended period, it can exceed the transaction timeout. This can occur when multiple transactions access the same resources simultaneously, leading to contention and delays.

Heavy read workload

Read query can take up access to a resource, so other write operations must wait before the resource is released. As more users or processes attempt to access data simultaneously, the strain on the database's CPU, memory, and I/O capacity can result in slower response times and a degraded user experience. Furthermore, maintaining data consistency and accuracy under such loads adds another layer of complexity to database management.

Replica usage

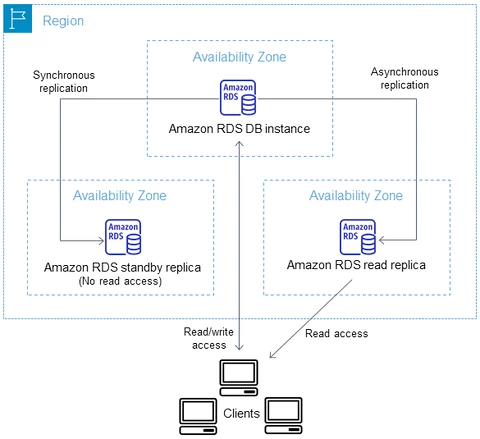

To ease the query workload on the tables, we apply the database replication technique. The concept is instead of having only one database, one or many replicas of the database are created. Therefore any Read requests to the database server will be directed to the replicas and master (optional). The write operation does not necessarily wait for the read operation to work on the resource anymore. However, any other write operations can still take up the resources.

.png)

Ref: AWS Read Replica

The reference to AWS

- Besides the replicas for request distribution, one of the best practices for database architecture in AWS is building a StandBy replica.

- This replica is only used for fail-over cases which is always inactive. Therefore, this replica will not handle read requests.

- From the diagram below, we can see that it applies the synchronization replication technique.

- In AWS, the latency cost is less than 100 mili-seconds (refers to Aurora Replica). Even though this may be counted near real-time, we already witnessed some inconsistent data before.

Fig 2. Ideal Architecture

Ref: AWS Read Replica

Code sample

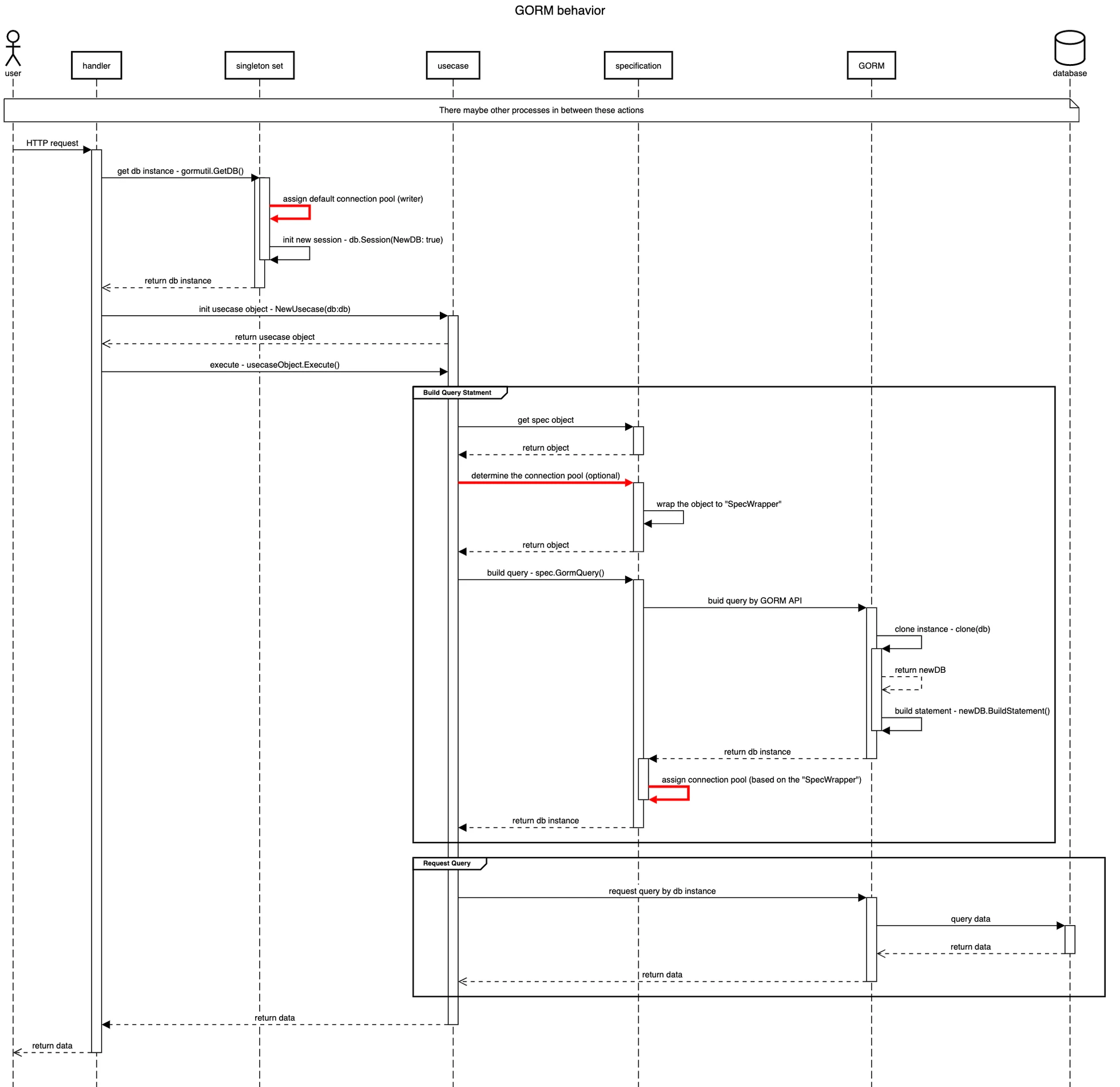

Firstly, let’s discuss the way we interact with our database in the project. We are leveraging GORM which is an ORM library providing a set of APIs and tools to automate the mapping process and handle the communication between the application and the database.

Simply explain, we will make any query requests to the database by a GORM instance. This instance is a singleton which is used by all the usecases that need to communicate with the database server. From the coding perspective, the singleton instance being accessed concurrently can lead to a race condition, so GORM already handles this problem by cloning the instance by itself (hidden in the package).

Note: What we mean by cloning is creating an entirely new object with remaining important information except the statement that has been built before.

Inherit from the idea of cloning a new database instance, we now apply the approach from GORM’s document suggestion which explicitly creates a new session. The session is simply a way of creating a new ORM instance that has some customized configuration. In addition, the session config contains a field - NewDB - which allows the instance to clone itself. Then when we make a query request to the database through the GORM package, we assign the database node to handle that query.

From the sequence diagram, we can find three places to assign the replica for the query to operate on.

- Getting singleton: Assign the connection and create a new session to preserve the cloning ability.

- We always want to set the primary database as default. The reason behind this is to minimize the risk after applying replica.

- At the usecase layer:

- At this layer, the query will be optionally assigned to a replica if needed. The query is wrapped in a custom type which is called "SpecWrapper"

- At specification layer:

- Determine to assign the replica if the spec is wrapped in “SpecWrapper”. This layer will execute the code of deciding which database node will handle the work.

GORM Query Redirection

- GORM always directs read requests to the replica if there are any, unless the current request belongs to the transaction.

- To determine which replica to operate on (there is more than one replica), GORM uses a default policy which is called “Random Policy”. As the name implies, GORM randomly selects a replica to work on.

- Therefore to manually manipulate the distribution of read requests to the master, we configure the connections pool for replicas to include the master.

Observation

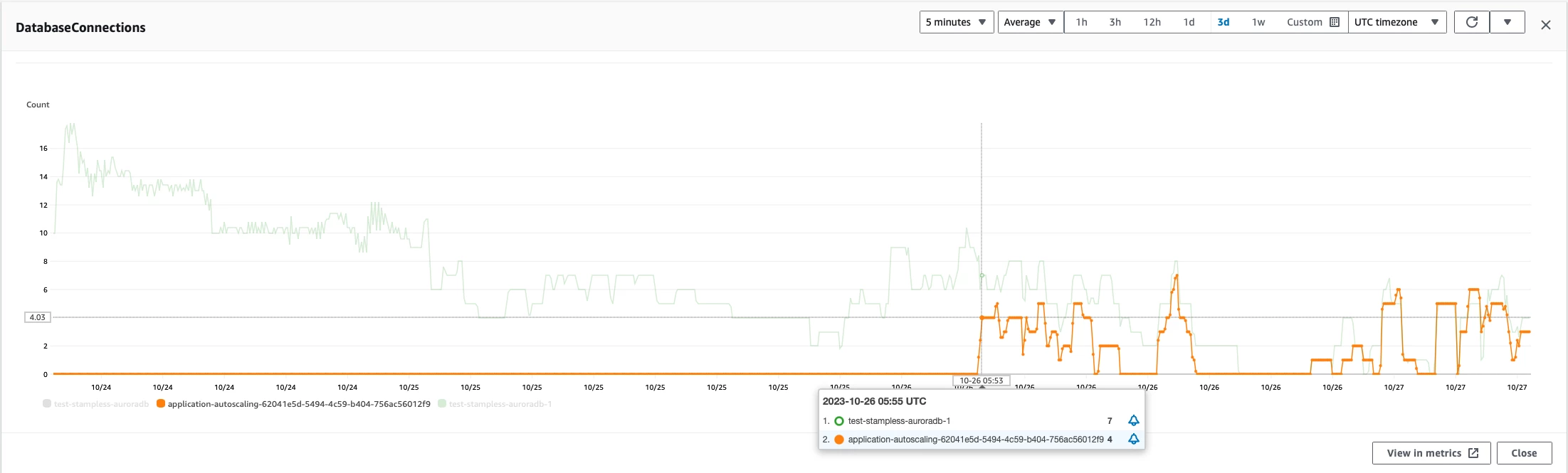

At the beginning of our project, while the traffic is still low, only one primary database is enough. However, there is still a limit and when it comes, to some defined thresholds have been reached followed by a decrease in performance. Therefore, one of the best solutions we concluded is applying the replication technique to solve the problem. Under our observation, the database connections have been reduced for the master node which eases the stress for our database. Even if the issue reappears, thanks to replicas, we simply scale out the database without the effect on our application.

Thoughts

In conclusion, applying the replica technique offers notable advantages such as improved performance due to load distribution, higher availability in case of outages, and better data protection. However, these benefits come at the cost of increased complexity in system management, potential data consistency issues, and higher operational expenses. Ultimately, while replicas can greatly enhance a website's reliability and user experience, they require careful implementation and ongoing maintenance to ensure they deliver their intended advantages effectively.

Tips to ensure the quality of CSV Import feature in Web Applications